Hi Team,

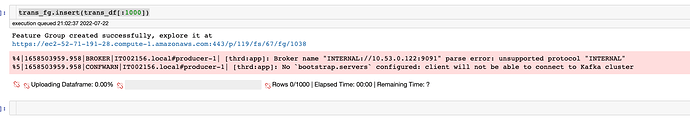

I have installed Hopsworks 3.0 using hopsworks-installer.sh, and the installation was successful. I am able to log in to the Hopsworks UI, and every service is working as it should. However, a problem occurs when I connect to my Hopsworks server from my local conda Python environment. I can log in and see the projects, but when I create feature groups and try to upload data, the feature groups appear in the UI with no data in them. I don’t get any errors, but I do get warnings (attached).

Can someone please help with this?

I solved this problem by opening /srv/hops/kafka/config/server.properties (sudo cat /srv/hops/kafka/config/server.properties) and changing the advertised listeners property: I replaced the private IP in INTERNAL://privateip with the public IP, so that it reads INTERNAL://publicip.
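For anyone who wants to script the change described above, here is a minimal Python sketch that rewrites the host of one named listener in the advertised.listeners line of a properties file's text. The function name `set_advertised_host`, the sample IPs, the ports, and the EXTERNAL listener in the sample are all made up for illustration; your server.properties may look different.

```python
def set_advertised_host(properties_text: str, listener: str, new_host: str) -> str:
    """Return properties_text with the host of `listener` replaced
    inside the advertised.listeners line. Other lines are untouched."""
    out = []
    for line in properties_text.splitlines():
        if line.strip().startswith("advertised.listeners="):
            key, _, value = line.partition("=")
            entries = []
            for entry in value.split(","):
                entry = entry.strip()
                name, sep, rest = entry.partition("://")
                if sep and name.upper() == listener.upper():
                    # Keep the port (if any) and swap only the host part.
                    _, colon, port = rest.rpartition(":")
                    if colon:
                        entry = f"{name}://{new_host}:{port}"
                    else:
                        entry = f"{name}://{new_host}"
                entries.append(entry)
            line = key + "=" + ",".join(entries)
        out.append(line)
    return "\n".join(out)


# Hypothetical file content; the addresses are placeholders, not real hosts.
sample = (
    "broker.id=0\n"
    "advertised.listeners=INTERNAL://10.0.0.5:9091,EXTERNAL://10.0.0.5:9092\n"
)
updated = set_advertised_host(sample, "INTERNAL", "203.0.113.7")
print(updated)
```

Kafka reads server.properties at startup, so the broker most likely needs a restart before a change like this takes effect.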

Hi FireHawkl.

Yes, you fixed it.

There is a new flag in hopsworks-installer.sh to set the public IP of the head node, which achieves the same thing you did by updating Kafka’s server.properties file:

[-kip|--kafka-public-ip) ip_address] Public IP address of the Kafka broker (typically the head VM).

I think we should make it mandatory for people to enter it using hopsworks-installer.sh, rather than optional as it is now.

Hey @Jim_Dowling

Thanks for replying. Yes, when I ran the installer I opted for the interactive, input-based install, and during installation it asked for the Kafka broker IP, which I provided. FYI, this setup is on an EC2 instance. I was trying to upload data from my local system using the Python engine, and that is where I hit this issue. I did two things: first, I changed the IP in Kafka’s server.properties; then, when calling the .insert() method, I also passed an argument that sets internal to True, which removes the INTERNAL:// tag from the URL. After this it worked.

Can you please tell me whether this is the right way to do it? Every time I upload, I need to pass the True flag in a dict as an argument to the insert method when creating a feature group.
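If the flag has to be passed on every upload, one way to avoid repeating it is a small wrapper around the insert call. This is only a sketch under assumptions: the post above does not name the option, so the key `internal_kafka` is my guess based on the `write_options` argument of the feature group insert method, and the helper name `insert_via_internal_listener` is made up. It does not say whether using the internal listener from outside the cluster is the recommended setup.

```python
from typing import Any, Optional


def insert_via_internal_listener(
    feature_group: Any,
    dataframe: Any,
    write_options: Optional[dict] = None,
) -> Any:
    """Call feature_group.insert() with the internal-listener flag set.

    ASSUMPTION: the flag is the write_options key "internal_kafka";
    check the documentation for your installed client version.
    """
    options = {"internal_kafka": True}
    # Caller-supplied options are merged in and can override the default.
    options.update(write_options or {})
    return feature_group.insert(dataframe, write_options=options)
```

With a real feature group object `fg` and a DataFrame `df`, the call would then be `insert_via_internal_listener(fg, df)` instead of passing the dict each time.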

I’m having a similar error, where I get this:

%3|1682351069.758|FAIL|Kevorks-MacBook-Pro.local#producer-5| [thrd:ssl://3.145.101.110:9092/bootstrap]: ssl://3.145.101.110:9092/1: 32 request(s) timed out: disconnect (after 58338ms in state UP)

What should I do to solve it?